OpenAI closes reasoning gap in voice agents


Good morning, AI enthusiasts. Typing made AI useful, but speech is where agents have to prove they can keep up with real life.

OpenAI’s new trio of real-time voice models is built for that messier interface. It adds a major reasoning upgrade, the ability to talk while thinking, and stronger tool use, moving AI voice agents closer to running tasks at the speed of natural conversation.

In today’s AI rundown:

- OpenAI’s reasoning upgrade for voice agents
- Google folds Fitbit into its AI health play
- Test multiple AI models with same prompt
- Anthropic plans for AI that builds itself
- 4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

🗣️ OpenAI’s reasoning upgrade for voice agents

Image source: OpenAI

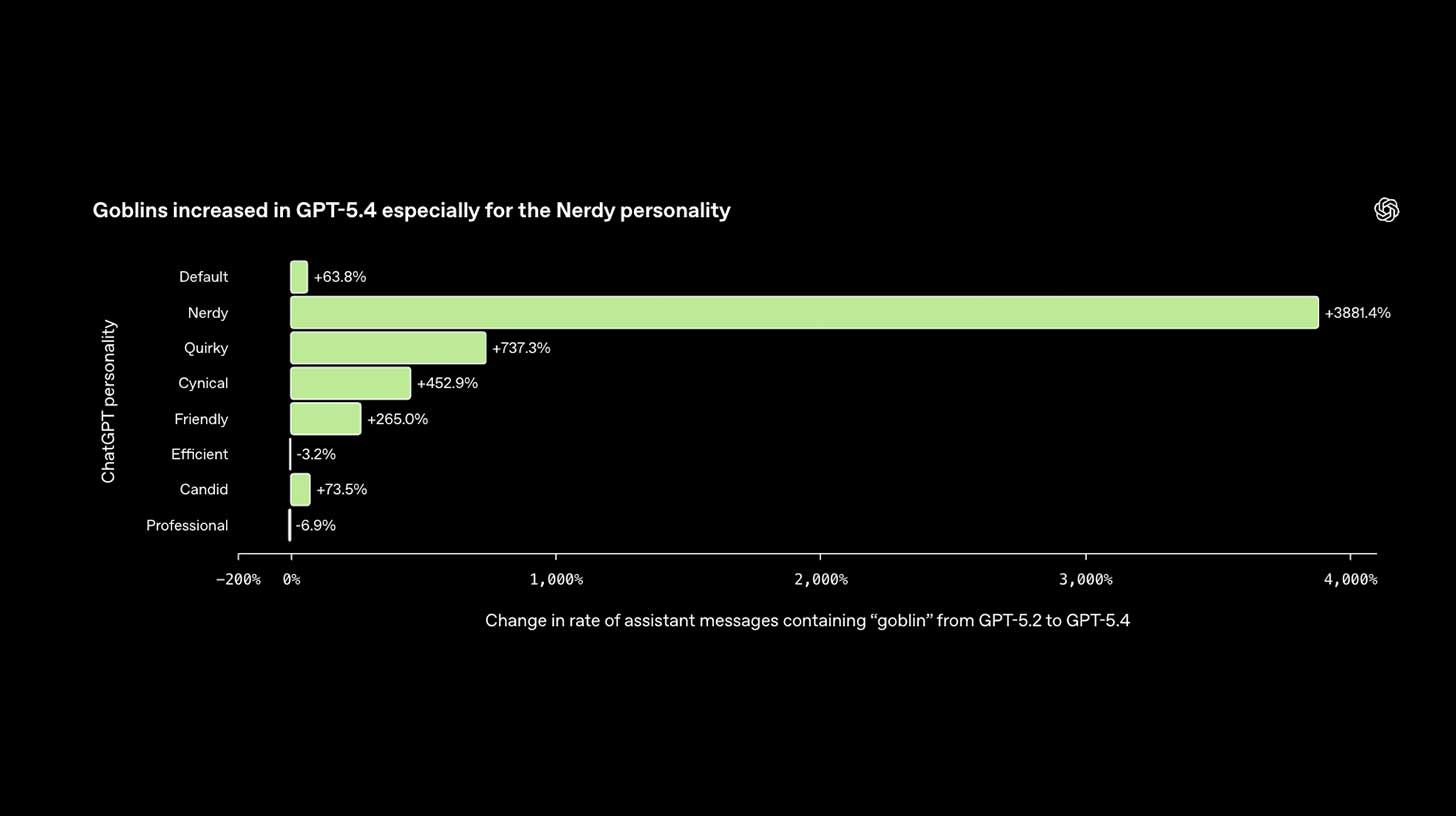

The Rundown: OpenAI just introduced GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper, three API voice models that bring upgrades in reasoning, streaming, tool use, and realism to AI voice agents and live speech.

The details:

- Realtime-2 brings GPT-5-level reasoning to live speech, can call multiple tools at once, talks while it thinks, and offers better tone control for more realistic delivery.
- On Big Bench Audio, Realtime-2 scored 96.6% vs. 81.4% for its predecessor, a 15.2-point jump in how well voice AI can reason in real time.
- OpenAI also shipped GPT-Realtime-Translate, a live translator covering 70+ languages, and GPT-Realtime-Whisper, a streaming transcription model, rounding out a full voice-agent toolkit.
- OpenAI said Zillow, Priceline, and Deutsche Telekom are already building on the models for real estate AI agents, voice-managed travel, and customer support.
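For reference, the two reported Big Bench Audio scores work out to a 15.2-point absolute gain, or roughly 19% relative to the predecessor’s score. This is simple arithmetic on the figures above, not a number OpenAI reported:

```latex
\Delta_{\text{abs}} = 96.6\% - 81.4\% = 15.2 \text{ points}, \qquad
\Delta_{\text{rel}} = \frac{15.2}{81.4} \approx 0.187 \approx 18.7\%
```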

Why it matters: The turn-based era of AI voice appears to be nearing its end, with OpenAI’s new models moving toward systems that reason better, use tools, and complete workflows without the awkward interruptions that pull users out of a natural conversational flow. The AI industry is fixated on text agents, but the next wave will be spoken to, not typed at.

TOGETHER WITH AWS MARKETPLACE

📊 15+ enterprise leaders on getting data AI-ready

The Rundown: AWS Marketplace just released a free book featuring 15 chapters from senior data and AI leaders at JPMorgan Chase, Siemens, Mercedes-Benz, Roche, and more — each sharing practical advice on building the data infrastructure needed for agentic analytics and intelligent agents.

Chapters cover topics including:

- Evolving data strategy for agentic AI and scaling data products
- Building on existing infrastructure with a pragmatic, business-first approach
- Unlocking value with classical ML, semantic layers, and cross-team alignment
- Real-world perspectives from leaders across different industries

Get your free digital copy today.

⌚️ Google folds Fitbit into its AI health play

Image source: Google

The Rundown: Google opened its AI health coach to the public after months in beta, folding the Fitbit app into a new Google Health platform and pairing it with a new $99 screenless tracker that feeds body data to the AI.

The details:

- Running on Gemini, the AI coach can tailor weekly workout routines, interpret uploaded medical records, and identify what a user ate from a phone photo.
- Google is consolidating the Fitbit app, Health Connect, Apple Health, wearable data, and U.S. medical records into a single Google Health hub.
- The new $99 Fitbit Air has no screen and weighs just 12g, with heart rate, oxygen, and temperature sensors that feed body data to the AI coach.
- Apple Watch, Garmin, and Oura owners are set to get AI coach access later this year, as Google opens the coach to hardware beyond its own.

Why it matters: AI’s role in personal health keeps growing. Bringing everything under one roof lets Google make the AI layer the core product while still owning a trusted wearable line, giving users the personalized guidance and context that other, less connected trackers typically lack.

AI TRAINING

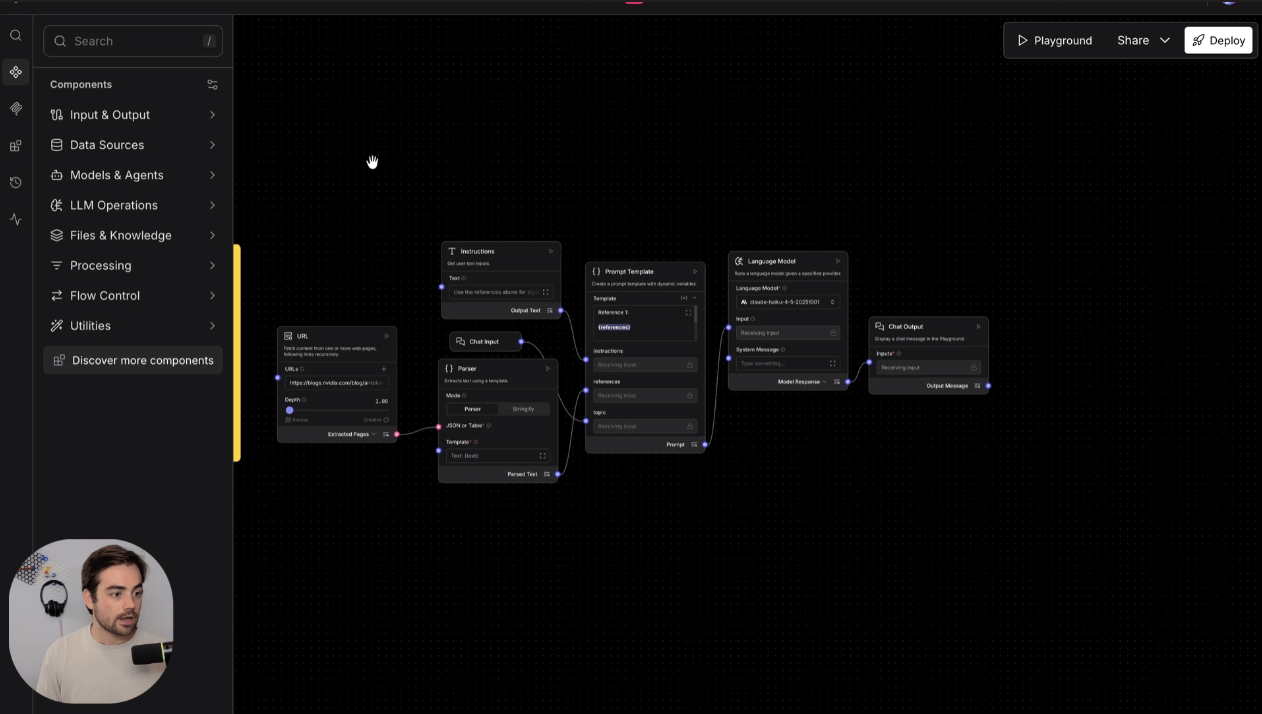

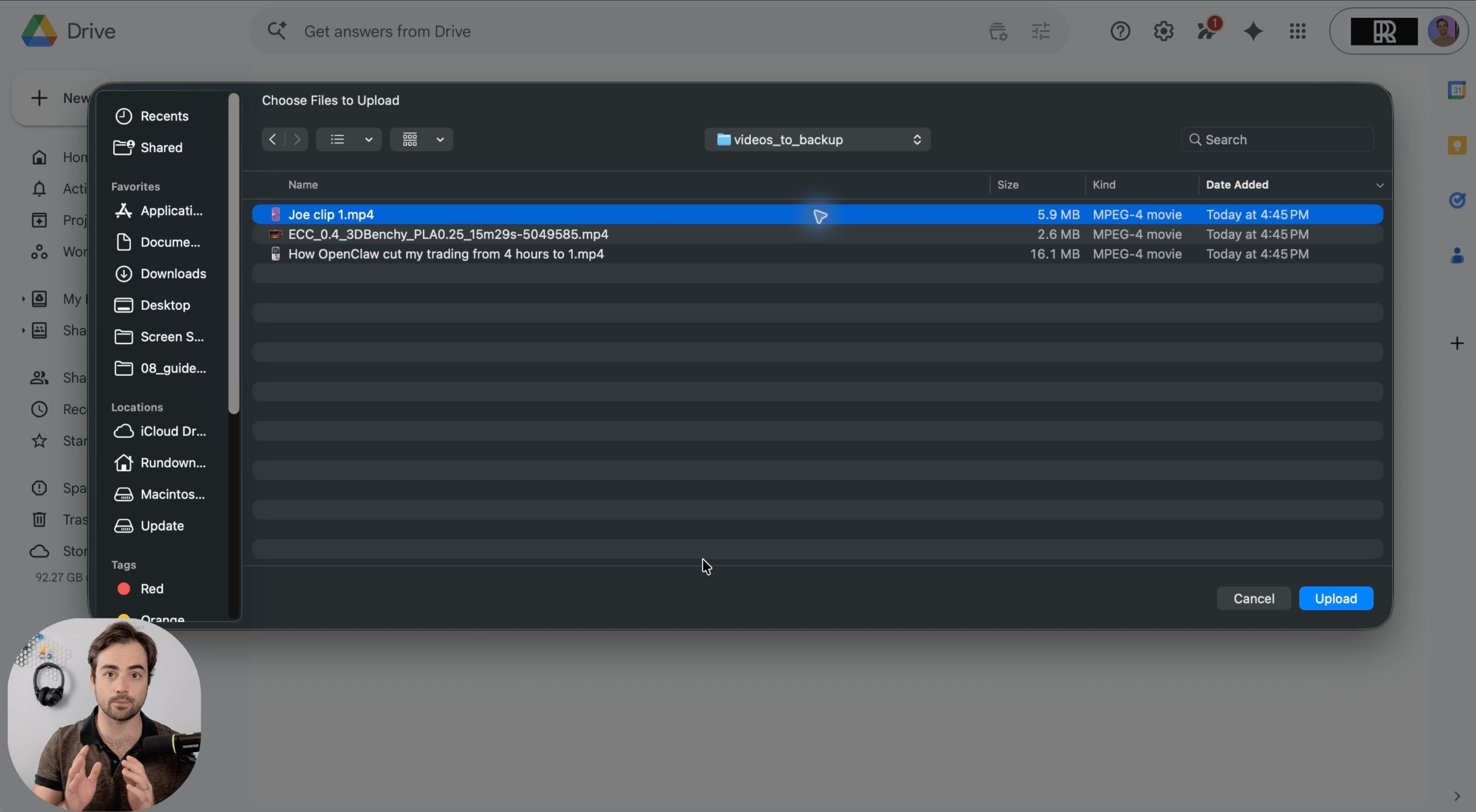

✏️ Test multiple AI models with same prompt

The Rundown: In this guide, you will learn how to use OpenRouter Fusion to test the same prompt across multiple AI models at once. Instead of opening five apps and guessing, you can compare outputs side by side and build a quick cheat sheet for work.

Step-by-step:

- Create an OpenRouter account, open OpenRouter Fusion, and choose how to pay for AI usage: OpenRouter credits or API keys you already pay for.
- In Fusion, pick the models you want to compare (we tested Opus 4.7 vs. GPT-5.4 vs. Grok) and run one benchmark prompt at a time, keeping it identical across models.
- Prompt something like: “You are advising a 20-person SaaS company deciding whether to replace its weekly status meeting with an async written update. Write a recommendation memo with 3 benefits, 3 risks, and a 2-week implementation plan. Keep it concise and practical.”
- Open the responses, read the side-by-side analysis, and note which model is strongest. In the demo, about 10 comparisons cost around 40 cents.
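If you would rather script the same comparison than click through Fusion, the minimal sketch below sends one identical prompt to several models through OpenRouter’s OpenAI-compatible chat completions endpoint. It is an illustration under stated assumptions, not how Fusion works internally, and it skips Fusion’s side-by-side analysis. The model slugs are hypothetical placeholders (confirm exact IDs in OpenRouter’s model browser), and the `OPENROUTER_API_KEY` environment variable name is an assumption.

```python
import json
import os
import urllib.request
from typing import Callable, Dict, List

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical model slugs: confirm the exact IDs in OpenRouter's model browser.
MODELS = ["anthropic/claude-opus-4.7", "openai/gpt-5.4", "x-ai/grok-4"]

# The benchmark prompt from the guide, kept identical for every model.
PROMPT = (
    "You are advising a 20-person SaaS company deciding whether to replace its "
    "weekly status meeting with an async written update. Write a recommendation "
    "memo with 3 benefits, 3 risks, and a 2-week implementation plan. "
    "Keep it concise and practical."
)


def build_payload(model: str, prompt: str) -> dict:
    """One request body per model; only the 'model' field differs."""
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}


def send_openrouter(payload: dict, api_key: str) -> str:
    """POST a payload to OpenRouter and return the first reply's text."""
    request = urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


def compare(models: List[str], prompt: str, send: Callable[[dict], str]) -> Dict[str, str]:
    """Run the same prompt against every model and map model -> reply text."""
    return {model: send(build_payload(model, prompt)) for model in models}


def compare_live(models: List[str] = MODELS, prompt: str = PROMPT) -> Dict[str, str]:
    """Live run; reads the key from OPENROUTER_API_KEY (assumed variable name)."""
    api_key = os.environ["OPENROUTER_API_KEY"]
    return compare(models, prompt, lambda payload: send_openrouter(payload, api_key))
```

Calling `compare_live()` returns a dict of model slug to reply text that you can read side by side. Passing a stub `send` function to `compare` lets you dry-run the loop without spending credits.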

Pro tip: Run a few prompts you use all the time, note which model wins each task, and use OpenRouter’s model browser to compare price and speed before you spend more.
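To turn that pro tip into a reusable cheat sheet, a few lines of Python can tally which model won each task and project spend from the demo’s figure of roughly 40 cents for 10 comparisons (about 4 cents each). These are hypothetical helpers, not an OpenRouter feature: the winner log is whatever you record by hand, and real costs vary by model and prompt length, so treat the linear estimate as a rough assumption.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def cheat_sheet(results: Iterable[Tuple[str, str]]) -> Dict[str, str]:
    """Map each task to the model that won it most often.

    `results` is a hand-recorded log of (task, winning_model) pairs.
    Ties go to the model recorded first for that task.
    """
    wins_by_task: Dict[str, Counter] = defaultdict(Counter)
    for task, winner in results:
        wins_by_task[task][winner] += 1
    return {task: wins.most_common(1)[0][0] for task, wins in wins_by_task.items()}


def estimate_cost(comparisons: int, demo_total: float = 0.40, demo_runs: int = 10) -> float:
    """Project spend linearly from the demo's ~$0.40 per 10 comparisons (assumption)."""
    per_comparison = demo_total / demo_runs  # about $0.04 per comparison
    return comparisons * per_comparison
```

Keeping the log as plain (task, winner) pairs means you can paste results from any tool, and ties resolve to the earliest recorded winner because `Counter.most_common` orders equal counts by first appearance.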

PRESENTED BY WEIGHTS & BIASES

🐝 New guide: Tools and workflows to develop AI agents

The Rundown: AI agents can dramatically boost productivity and innovation, but getting them into the real world takes a lot of iteration. Whether you’re exploring agents for the first time or refining your current approach, this primer delivers actionable insights to help your team succeed and thrive in the AI era.

Get the guide to learn:

- What defines agentic applications and why observability matters
- A proven workflow for building agentic AI applications
- How pioneering companies are building and deploying AI agents today

Download a primer on building successful AI agents.

THE ANTHROPIC INSTITUTE

🔬 Anthropic plans for AI that builds itself

Image source: Anthropic

The Rundown: Anthropic’s newly formed research arm, The Anthropic Institute (TAI), published its formal research agenda, a document that treats the possibility of AI systems improving themselves as something the company is actively preparing for.

The details:

- TAI sits inside Anthropic, letting researchers study Claude usage, internal workflows, and security signals before they hit the wider market.
- The Institute’s agenda spans security threats, economic disruption, governance, and planning for self-improving models.
- The team also proposed Cold War-style hotlines between labs and governments, plus “fire drill” exercises for sudden capability surges.
- TAI said it is committed to publishing Economic Index data, monthly worker surveys, threat research, and more details on its own internal AI-boosted R&D.

Why it matters: We previously covered Anthropic co-founder Jack Clark’s blog post on self-improving systems, and TAI’s research agenda brings that idea sharply into focus. Anthropic’s talk of “fire drills” and Cold War-style hotlines is meant to prepare for an “intelligence explosion” that may arrive sooner than many expected.

QUICK HITS

🛠️ Trending AI Tools

- ✈️ Serko.ai – The AI travel assistant that plans, books, and manages your entire business trip, so you can skip the busywork*
- 🗣️ GPT-Realtime-2 – Voice AI that thinks, calls tools, and maintains conversational flow
- 🎥 Studio Agent – ElevenLabs’ AI editor that drafts videos and places sound effects
- 🎆 Grok Imagine Quality Mode – xAI’s image generation with higher realism

*Sponsored Listing

📰 Everything else in AI today

Spotify launched ‘Personal Podcasts’, a tool that lets AI agents turn items like briefings or class notes into personal podcasts that appear directly in users’ Spotify libraries.

OpenAI introduced Trusted Contact, an opt-in ChatGPT feature that alerts a designated friend or family member if signs of self-harm risk are detected.

Scale AI landed a $500M Pentagon contract for military data analysis, marking a 5x jump from last September’s $100M deal.

Perplexity rolled out its Personal Computer to all Mac users, letting the agent take actions across a user’s local machine and files, as well as through the Comet browser.

Mozilla published a blog post about using Claude Mythos Preview for security, saying the model patched more bugs in April than in the past 15 months combined.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Tatiana B. in San Francisco, CA:

“I’m COO of a startup and mom to a two-and-a-half-year-old. Managing both is a lot, and keeping track of everything I need to do at home on top of work can be a real mental drain.

So I use AI to help me compile a document covering everything I need help with at home: my daughter’s meal preferences, her daily routine, and house chores. I treat it like a work project, going back and forth with AI to think through what I actually need done, fill in gaps I hadn’t thought of, and get it all out of my head into something I can hand to someone else.

Now, when someone new comes to help at home, I don’t have to explain everything from scratch. That frees me up to actually be present with my daughter when I’m with her, and focused on work when I’m not. I also know I’m lucky to be in a position to hire help, but using AI to think clearly about what you need and get it out of your head is something anybody can do.”

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

- Read our last AI newsletter: Anthropic, SpaceXAI become unlikely partners
- Read our last Tech newsletter: GameStop’s wild bid to buy eBay
- Read our last Robotics newsletter: Genesis robot makes breakfast
- Today’s AI tool guide: Test multiple AI models with the same prompt, fast

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown